openai/clip-vit-large-patch14 cannot be traced with torch_tensorrt.compile · Issue #367 · openai/CLIP · GitHub

open_clip: Welcome to an open source implementation of OpenAI's CLIP (Contrastive Language-Image Pre-training).

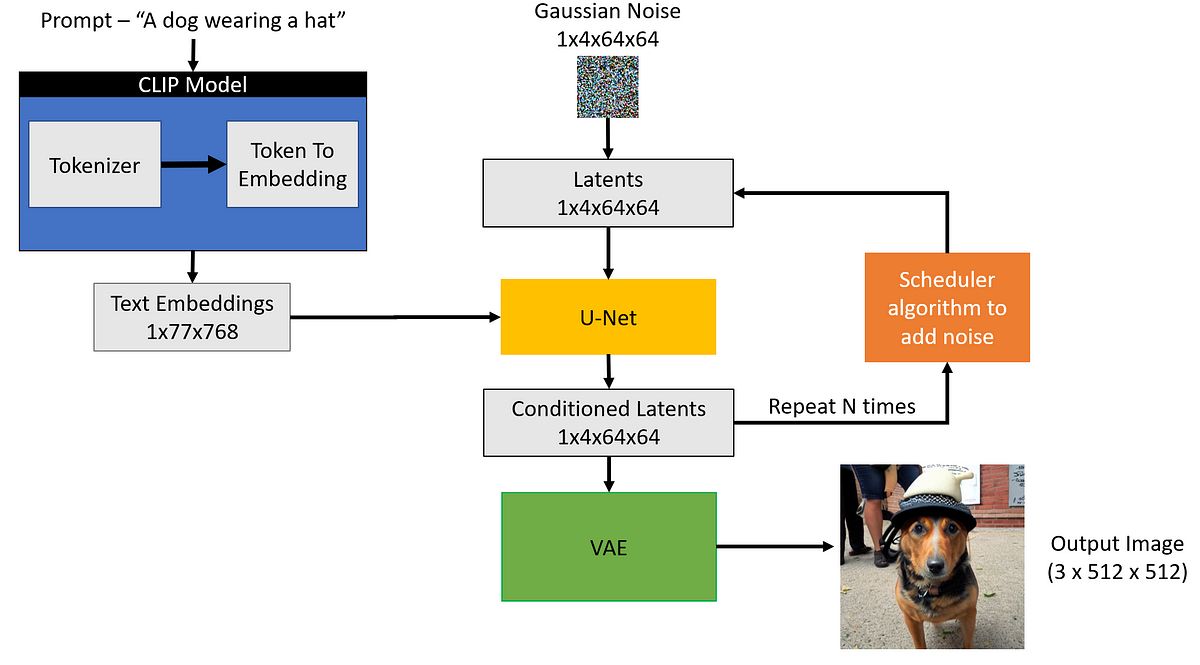

Can't load tokenizer for 'openai/clip-vit-large-patch14' · Issue #659 · CompVis/stable-diffusion · GitHub

Can't load the model for 'openai/clip-vit-large-patch14'. · Issue #436 · CompVis/stable-diffusion · GitHub

Mastering the Huggingface CLIP Model: How to Extract Embeddings and Calculate Similarity for Text and Images | Code and Life

【bug】Some weights of the model checkpoint at openai/clip-vit-large-patch14 were not used when initializing CLIPTextModel · Issue #273 · kohya-ss/sd-scripts · GitHub

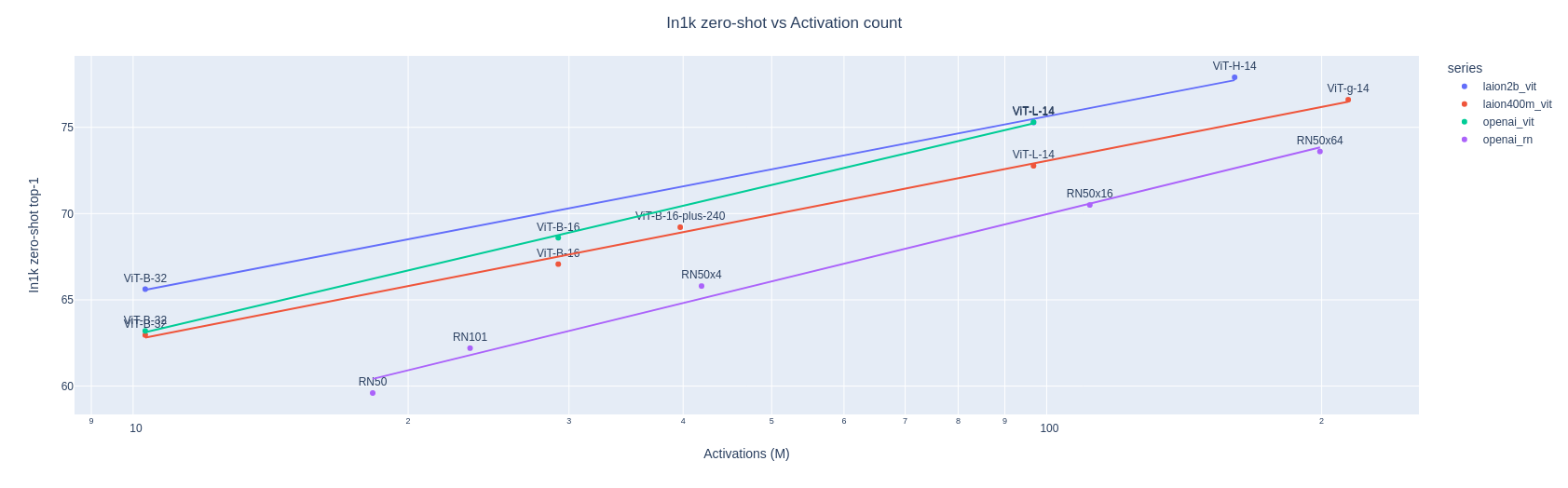

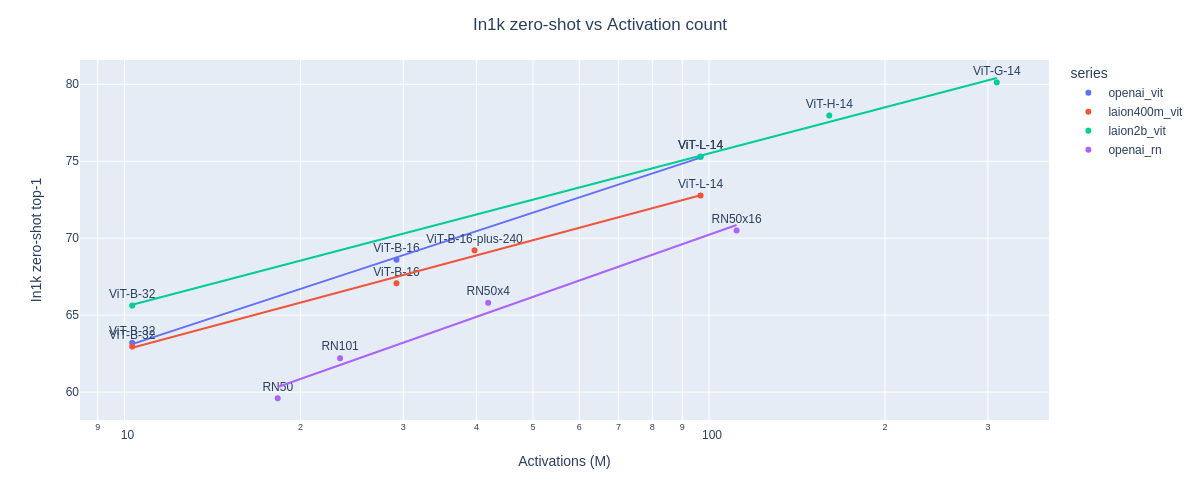

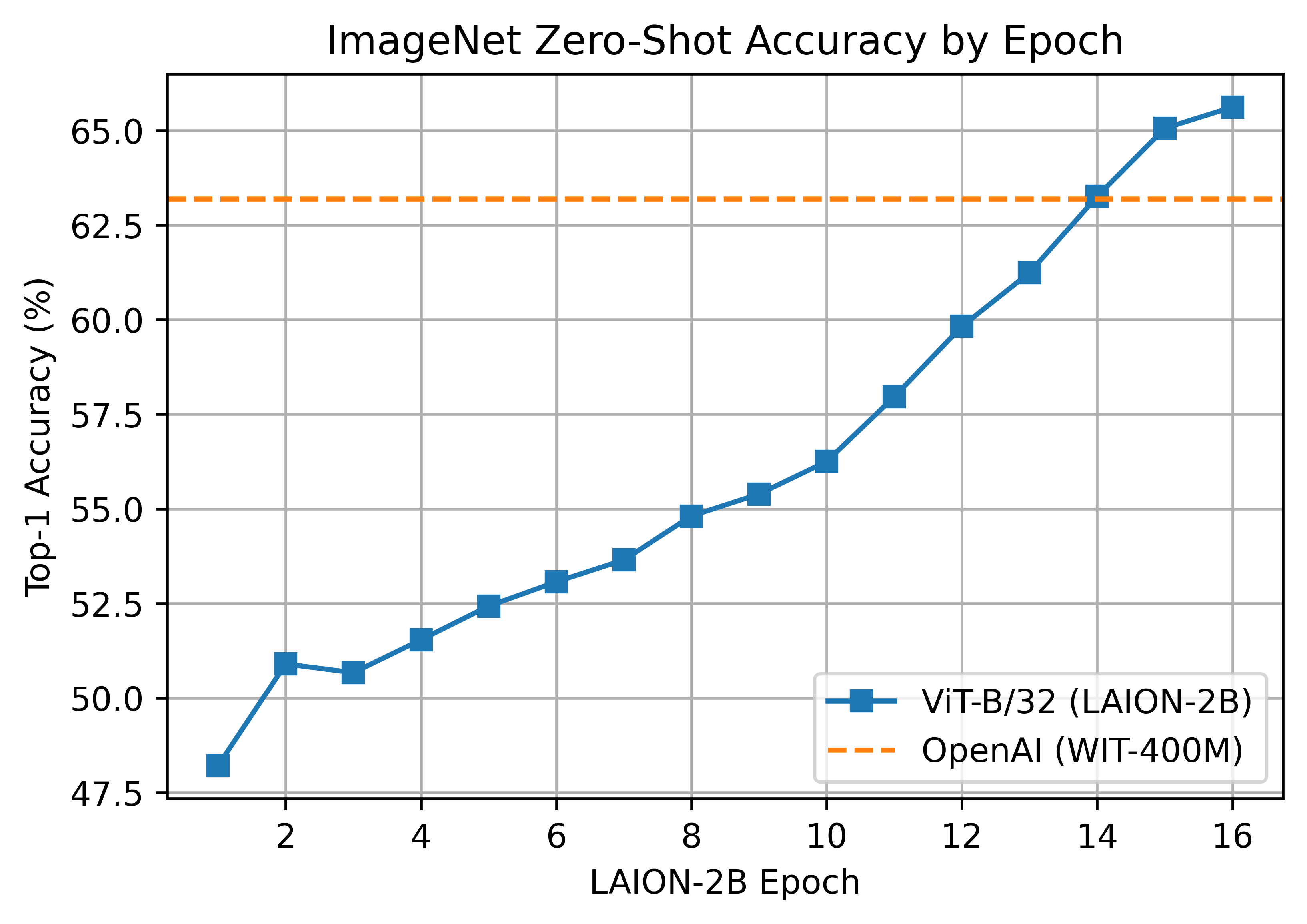

Aran Komatsuzaki on X: "+ our own CLIP ViT-B/32 model trained on LAION-400M that matches the performance of OpenAI's CLIP ViT-B/32 (as a taste of much bigger CLIP models to come).